Shadow AI in Delivery: Risk, reality and the right path

27 Feb 2026

Right now, organisations are trying to turn a cruise ship. Not because they are slow. Not because they are resistant. But because when an organisation is responsible for client data, contractual obligations and commercial exposure, it cannot move at the speed of hype.

Leadership teams are fielding a steady stream of requests to 'just try this AI tool'. IT is assessing security implications and compatibility with existing systems. Governance frameworks cannot be finalised until a tool is selected, configured and its usage clearly defined. Then comes training and change communication.

It is slow. Deliberate. Occasionally frustrating. But necessary. When client data and commercial exposure are involved, experimentation has consequences.

And while the ship turns, you are still delivering.

Deadlines have not paused. Clients have not relaxed expectations. Your workload has not become lighter simply because AI is under review.

So the real question is not what your organisation is doing. It is what you are doing.

Three roles Delivery Leaders adopt in response to AI

In reality, most Delivery Leaders fall into one of three distinct roles when navigating AI uncertainty. As you read them, notice which one feels familiar.

1. The Policy Purist

You have decided not to engage with AI until your organisation releases an approved solution. You are waiting for the official tooling, the governance sign-off and the internal training.

This stance is principled. It protects you from accidental exposure and keeps you fully aligned with policy.

But there is a trade-off. While you wait, others are building literacy. They are learning how prompts shape output, where AI genuinely adds value and where it confidently invents nonsense. When enterprise tools eventually arrive, those who have developed that understanding will adapt faster.

Compliance is preserved. Momentum slows.

2. The Shadow AI Operator

You are experimenting. Not recklessly. Not carelessly. Pragmatically.

You use AI to structure updates, refine tone or sense-check complex explanations. You intend to sanitise data first. But without structured, repeatable exports, good intentions collapse under time pressure. You copy, paste and move on.

Most of the time, nothing happens.

But it only takes one overlooked column, one hidden data field or one client reference for a small shortcut to become a serious issue.

This approach delivers short-term speed, but carries long-term risk.

3. The Strategic Delivery Architect

You accept that AI is here. You respect that governance takes time. And you refuse to sit still in the meantime.

You are not waiting passively for enterprise tooling. And you are not experimenting loosely under deadline pressure.

Instead, you focus on control. You design your Delivery environment so that when AI is used, it is used safely and intentionally. Risk is reduced because structure comes first.

And when the official enterprise stack finally lands, you are not starting from zero. You already have clean data, disciplined workflows and repeatable exports. You are ready to move faster because you did the groundwork early.

Speed without discipline creates exposure. Preparation creates both protection and readiness.

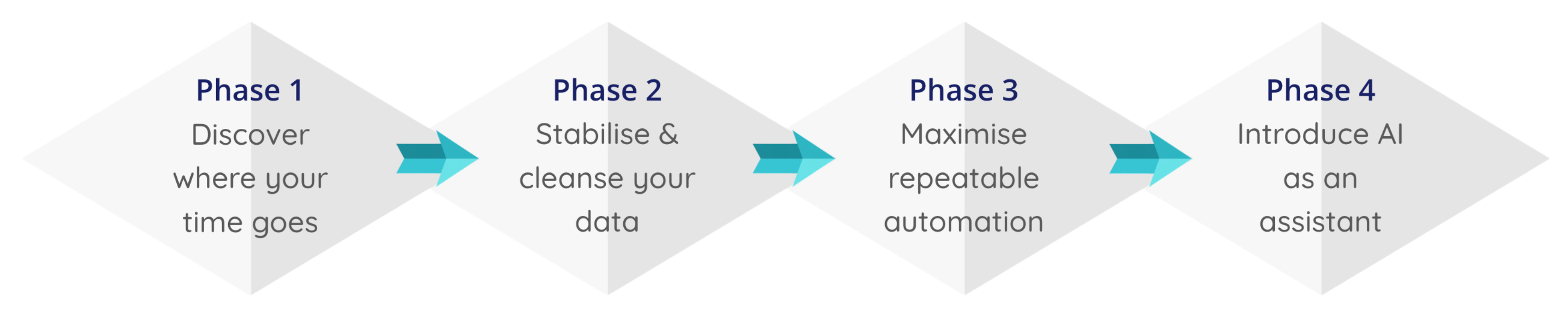

A structured path to using AI properly

This model is for the Delivery Leader who wants to use AI properly, within organisational boundaries, and be able to defend that decision if challenged.

Phase 1: Discover where your time actually goes

Before introducing any new technology, you need visibility.

Most Delivery Leaders underestimate how much of their week disappears into repeatable admin. Audit your workload over a defined period and look at it from a few different angles:

- Where is the repetition?

- Where is the friction?

- Where is the work rules-based rather than judgement-based?

- Where does data sensitivity introduce risk?

When you map your work in this way, patterns start to appear. Some tasks are ideal for automation. Some are suitable for AI assistance. Others must remain human.

You cannot optimise what you have not measured.

I’ve built an interactive Google Sheet called the Delivery AI Readiness Prioritiser. You input your own workload, and it applies a scoring model that balances time impact, frustration, risk and automation potential. It then ranks your priorities, recommends where to start and highlights when risk outweighs time savings.
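The sheet's actual formula is not reproduced here. As a rough sketch of how a scoring model of this kind could work, the Python below assumes each task is rated from 1 to 5 on the four dimensions. The weights, the names `Task`, `benefit_score` and `rank`, and the sample tasks are all hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass

# Hypothetical weights: illustrative only, not the Prioritiser's real values.
WEIGHTS = {"time_impact": 0.4, "frustration": 0.2, "automation_potential": 0.4}


@dataclass
class Task:
    name: str
    time_impact: int           # 1 (minutes a week) to 5 (hours a week)
    frustration: int           # 1 (painless) to 5 (dreaded)
    automation_potential: int  # 1 (pure judgement) to 5 (pure rules)
    risk: int                  # 1 (no sensitive data) to 5 (client data throughout)


def benefit_score(task: Task) -> float:
    """Weighted benefit of automating or assisting the task, on a 1-5 scale."""
    return sum(getattr(task, field) * weight for field, weight in WEIGHTS.items())


def risk_outweighs_benefit(task: Task) -> bool:
    """Flag tasks whose data-sensitivity rating exceeds the weighted benefit."""
    return task.risk > benefit_score(task)


def rank(tasks: list[Task]) -> list[Task]:
    """Order tasks by benefit minus risk, highest first."""
    return sorted(tasks, key=lambda t: benefit_score(t) - t.risk, reverse=True)


# Made-up sample workload.
tasks = [
    Task("Weekly status report", time_impact=4, frustration=4, automation_potential=5, risk=2),
    Task("Client escalation email", time_impact=2, frustration=3, automation_potential=1, risk=5),
    Task("Overdue ticket chasing", time_impact=3, frustration=5, automation_potential=5, risk=1),
]

for task in rank(tasks):
    flag = " (risk outweighs benefit)" if risk_outweighs_benefit(task) else ""
    print(f"{task.name}: benefit {benefit_score(task):.1f}, risk {task.risk}{flag}")
```

With these sample ratings, the rules-based chasing task ranks first and the client escalation email is flagged, which matches the intuition that judgement-heavy, data-sensitive work should stay human.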

Phase 2: Stabilise and cleanse your data

Once you understand where the time drains are, fix the system you already run Delivery on.

Whether that is Jira, Azure DevOps, Monday, Asana or something else, your core platform is the foundation everything else depends on. If the data inside it is inconsistent, incomplete or loosely structured, reporting becomes unreliable and decision-making suffers.

Focus on fundamentals like:

- Standardising status definitions across teams

- Removing duplicate or redundant custom fields

- Replacing free-text reporting fields with controlled dropdowns

- Aligning issue hierarchy structures

- Enforcing consistent naming conventions

- Cleaning incomplete or stale records

The goal is simple. Remove ambiguity. Make your reporting trustworthy. Without this foundation, nothing else will scale.
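To make the first item on that list concrete, here is a minimal Python sketch of standardising status values in an exported set of records. It assumes the records arrive as plain dictionaries with a `status` field. The mapping and the names `CANONICAL_STATUS`, `normalise_status` and `audit_statuses` are hypothetical, so substitute the vocabulary your teams have actually agreed.

```python
from typing import Optional

# Hypothetical mapping from the variants people type to one agreed vocabulary.
CANONICAL_STATUS = {
    "to do": "To Do",
    "todo": "To Do",
    "backlog": "To Do",
    "in progress": "In Progress",
    "wip": "In Progress",
    "doing": "In Progress",
    "done": "Done",
    "closed": "Done",
    "resolved": "Done",
}


def normalise_status(raw: str) -> Optional[str]:
    """Return the agreed status, or None when the value needs a human decision."""
    key = " ".join(raw.strip().lower().split())  # trim, lowercase, collapse spaces
    return CANONICAL_STATUS.get(key)


def audit_statuses(records: list[dict]) -> tuple[list[dict], list[dict]]:
    """Split records into cleaned rows and rows with unrecognised statuses."""
    cleaned, needs_review = [], []
    for record in records:
        status = normalise_status(record.get("status", ""))
        if status is None:
            needs_review.append(record)
        else:
            cleaned.append({**record, "status": status})
    return cleaned, needs_review


# Made-up sample records.
records = [
    {"id": "D-1", "status": " WIP "},
    {"id": "D-2", "status": "Closed"},
    {"id": "D-3", "status": "Parked?"},
]
cleaned, needs_review = audit_statuses(records)
print(cleaned)       # rows with a standardised status
print(needs_review)  # rows someone needs to look at
```

Unrecognised values are routed to a review list rather than guessed at, so the clean-up never quietly invents a status.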

Phase 3: Maximise rule-based automation

Once your Delivery platform is structured, the next step is to actually use the capability that is already sitting inside it.

Most Delivery systems come with built-in automation. The issue is rarely missing functionality. It is that teams only scratch the surface of what those tools can already do.

Rule-based automation is straightforward. You define a condition, you define the action that should follow, and the system executes it consistently. Every time that condition is met, the same outcome happens. No judgement. No interpretation. Just disciplined, predictable behaviour.
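Stripped of any particular tool, the condition-and-action pattern can be sketched in a few lines of Python. This is not how Jira, Azure DevOps or any other platform implements its automation. The `WorkItem` and `Rule` types, the `run_rules` function and the sample data are hypothetical and exist only to show the shape of a rule.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable


@dataclass
class WorkItem:
    key: str
    assignee: str
    due: date
    done: bool = False


@dataclass
class Rule:
    name: str
    condition: Callable[[WorkItem, date], bool]  # when should the rule fire?
    action: Callable[[WorkItem], str]            # what happens when it does?


def run_rules(items: list[WorkItem], rules: list[Rule], today: date) -> list[str]:
    """Apply every rule to every item and return the actions that fired."""
    fired = []
    for item in items:
        for rule in rules:
            if rule.condition(item, today):
                fired.append(rule.action(item))
    return fired


# One rule: unfinished work past its due date triggers a reminder.
overdue_reminder = Rule(
    name="Remind on overdue work",
    condition=lambda item, today: not item.done and item.due < today,
    action=lambda item: f"Reminder to {item.assignee}: {item.key} is overdue",
)

# Made-up sample items.
items = [
    WorkItem("D-101", "Asha", date(2026, 2, 20)),
    WorkItem("D-102", "Ben", date(2026, 3, 5)),
    WorkItem("D-103", "Cara", date(2026, 2, 10), done=True),
]
messages = run_rules(items, [overdue_reminder], today=date(2026, 2, 27))
print(messages)
```

Only the unfinished, past-due item produces a reminder. The same inputs always give the same result, which is the whole point of a rule.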

In practice, you can configure rules to:

- Trigger reminders for overdue work

- Enforce mandatory fields through validation rules

- Auto-assign work based on team or component

- Generate one-click exports of anonymised reporting views

- Or better still, replace recurring manual reports with automated dashboards and scheduled summaries

This is not flashy. It is foundational. And when configured properly, it removes repetitive admin, reduces human error and protects the integrity of your reporting.
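The anonymised export is the item most worth getting right, because it decides what can leave your environment. One way to approach it is an allow-list: name the columns that may be exported and drop everything else by default. The Python sketch below assumes the data is available as a list of dictionaries. The column names and the `export_anonymised` function are hypothetical.

```python
import csv
import io

# Hypothetical allow-list: only these columns may leave the Delivery platform.
ALLOWED_COLUMNS = ("key", "status", "team", "story_points")


def export_anonymised(rows: list[dict], allowed: tuple = ALLOWED_COLUMNS) -> str:
    """Return CSV text containing only allow-listed columns, in a fixed order."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=allowed)
    writer.writeheader()
    for row in rows:
        # Copy only the allowed fields; anything else is never written.
        writer.writerow({column: row.get(column, "") for column in allowed})
    return buffer.getvalue()


# Made-up sample row containing fields that must not be shared.
rows = [
    {
        "key": "D-101",
        "status": "In Progress",
        "team": "Payments",
        "story_points": 5,
        "client_name": "Acme Ltd",
        "assignee_email": "asha@example.com",
    },
]
output = export_anonymised(rows)
print(output)
```

An allow-list fails safe. A custom field added next month stays out of the export until someone deliberately adds it, which is the opposite of hoping you spot the one overlooked column. It does not inspect the contents of allowed columns, so free-text fields still deserve caution.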

Phase 4: Introduce AI as an assistant

Once your data is structured, your reporting is live and your rule-based automation is handling the predictable work, AI can sit on top of it.

Used properly, it can:

- Draft a weekly update from structured reporting

- Summarise a retrospective into clear themes

- Turn messy meeting notes into defined actions

- Refine stakeholder messaging before it goes out

It works best when the inputs are already clean.

What it should not do is touch live operational records, ingest raw client data or override governed systems. Those remain inside your controlled environment.

AI is a thinking partner, not a system of record.

Used this way, it saves time without increasing exposure. It sharpens clarity instead of introducing risk.

And because you have already done the groundwork, you are using it deliberately, not desperately.

The real decision

AI will enter your Delivery environment one way or another. It may arrive through a formal enterprise rollout with governance documentation and structured training. Or it may appear during a pressured week when you are trying to maintain momentum and you reach for a tool that promises speed.

The decision is not whether AI is coming. The decision is whether you introduce it deliberately or allow it to seep in reactively.

If you have stabilised your data, tightened your workflows and designed controlled reporting exports, you are not waiting helplessly for the cruise ship to finish turning. You can begin using AI within clear boundaries, without exposing sensitive information or compromising trust. The groundwork you have already done becomes the safeguard.

This is the difference between shadow AI and structured AI use. One relies on urgency and good intentions. The other is designed, controlled and defensible.

When the official enterprise AI stack eventually lands, you are not starting from zero. You already have clean data, disciplined systems and clarity around what should and should not leave your environment. That preparation gives you both protection and readiness.

In Delivery, speed alone rarely wins. Structure is what compounds.